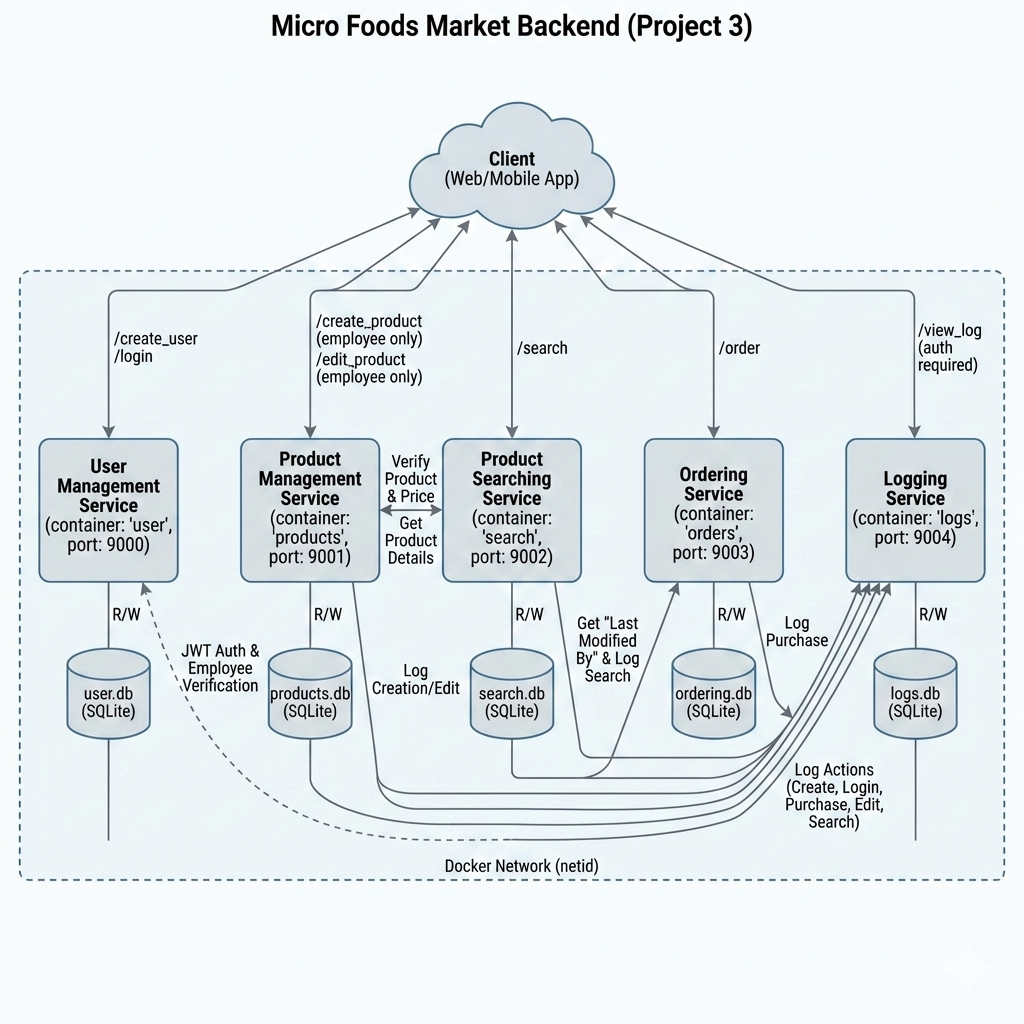

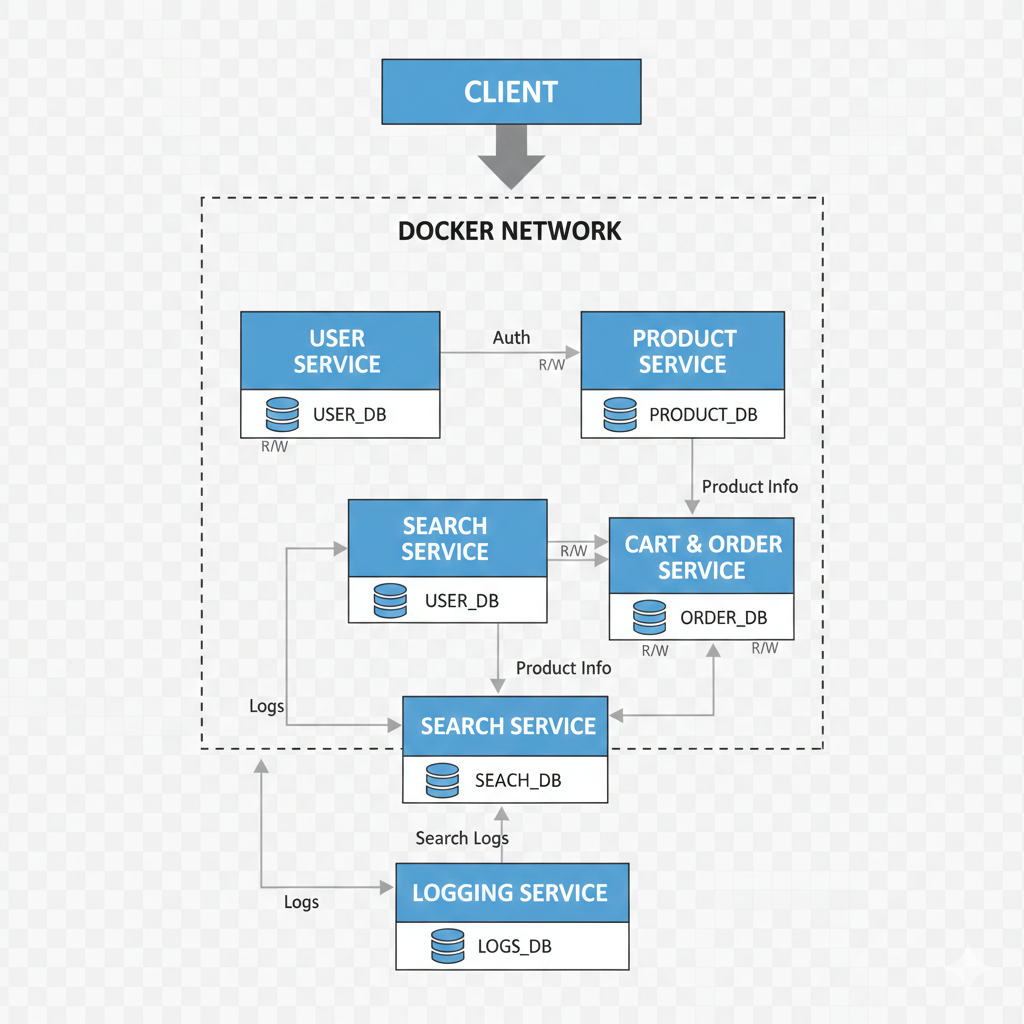

System Architecture

The system was deployed as five separate Docker containers, communicating over a shared bridge network. This separation enforced decoupling and established clear API contracts between services.

1. User Management

Handles user creation, login, password hashing, and JWT generation.
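As a minimal sketch of the hashing and token steps, the following uses only the Python standard library. The source does not state which hashing scheme, signing algorithm, or claim names the service used, so PBKDF2-SHA256, HS256, the `sub`/`role`/`exp` claims, and every function name and `SECRET_KEY` here are assumptions for illustration.

```python
import base64
import hashlib
import hmac
import json
import os
import time
from typing import Optional, Tuple

SECRET_KEY = b"change-me"  # hypothetical signing key; load from configuration in practice


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, digest) using PBKDF2-SHA256 (an assumed scheme)."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 200_000)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest with the stored salt and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWT segments require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def make_jwt(username: str, role: str, ttl_seconds: int = 3600) -> str:
    """Build an HS256-signed JWT carrying the user's name and role (assumed claims)."""
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"sub": username, "role": role, "exp": int(time.time()) + ttl_seconds}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(SECRET_KEY, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"
```

A login handler along these lines would call `verify_password` against the stored salt and digest, then return `make_jwt(username, role)` to the client.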

2. Product Management

Manages product state. Only employees may modify products.
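The employee-only rule could be enforced with a check like the one sketched below. It assumes the caller's role arrives as a `role` claim in an already verified token; the claim name, the `"employee"` value, the `Forbidden` exception, and the in-memory `products` dictionary are all hypothetical.

```python
from typing import Any, Dict


class Forbidden(Exception):
    """Raised when a caller lacks permission to modify products."""


def require_employee(claims: Dict[str, Any]) -> None:
    # Assumes the token's claims were verified upstream and carry a "role" field.
    if claims.get("role") != "employee":
        raise Forbidden("only employees may modify products")


def update_product(
    products: Dict[str, Dict[str, Any]],
    claims: Dict[str, Any],
    product_id: str,
    changes: Dict[str, Any],
) -> Dict[str, Any]:
    """Apply changes to a product after confirming the caller is an employee."""
    require_employee(claims)
    product = products.setdefault(product_id, {})
    product.update(changes)
    return product
```

Keeping the check in one small function means every mutating endpoint can call it before touching product state.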

3. Product Searching

Queries products and retrieves metadata via the Logging Service.

4. Ordering

Processes customer orders and calculates final totals.
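The total calculation might look like the sketch below. The source does not describe how totals were computed, so this assumes an order is a list of `(product_id, quantity)` pairs and that the total is simply the sum of unit price times quantity, rounded to two decimal places; `order_total` and its parameters are illustrative names.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Dict, Iterable, Tuple


def order_total(items: Iterable[Tuple[str, int]], prices: Dict[str, Decimal]) -> Decimal:
    """Sum unit price * quantity over all line items, rounded to cents."""
    total = Decimal("0")
    for product_id, quantity in items:
        if quantity <= 0:
            raise ValueError(f"quantity for {product_id!r} must be positive")
        total += prices[product_id] * quantity
    # Decimal avoids the rounding drift that binary floats introduce for currency.
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
```

For example, with prices of 0.50 and 1.25, an order of three of the first item and two of the second yields a total of 4.00.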

5. Logging

Centralized sink for all successful system events.
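A sink that keeps only successful events can be sketched as follows. The storage here is an in-memory list, and the `LogEntry` fields and `record` signature are assumptions; the source does not specify how the service received or persisted events.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class LogEntry:
    service: str
    event: str
    timestamp: datetime


@dataclass
class LogSink:
    entries: List[LogEntry] = field(default_factory=list)

    def record(self, service: str, event: str, success: bool) -> bool:
        """Store the event only if it succeeded; return whether it was stored."""
        if not success:
            return False
        self.entries.append(LogEntry(service, event, datetime.now(timezone.utc)))
        return True
```

Each of the other services would report to this sink after completing an operation, so failed operations never appear in the log.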